
Artificial Intelligence tool developed to predict the structure of the Universe

The origin of the Universe’s voids and filaments can now be studied within seconds, thanks to an artificial intelligence tool called Dark Emulator.

Advances in telescopes have enabled researchers to study the Universe in greater detail and to establish a standard cosmological model that explains various observational facts simultaneously. But there is still much that researchers do not understand. Remarkably, most of the Universe is made up of dark matter and dark energy, whose nature no one has yet been able to identify. One promising avenue for solving these mysteries is the structure of the Universe. Today’s Universe is made up of filaments, where galaxies cluster together and look like threads from far away, and voids, where there appears to be nothing. The discovery of the cosmic microwave background has given researchers a snapshot of what the Universe looked like close to its beginning, and understanding how its structure evolved into what it is today would reveal valuable characteristics of dark matter and dark energy.

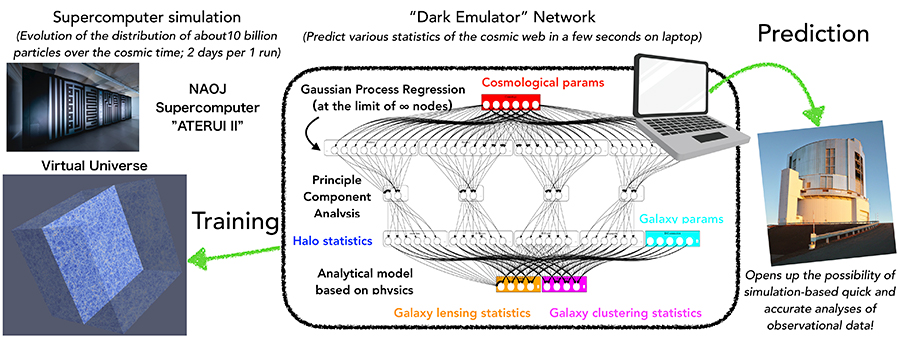

A team of researchers, including Kyoto University Yukawa Institute for Theoretical Physics Project Associate Professor Takahiro Nishimichi and Kavli Institute for the Physics and Mathematics of the Universe (Kavli IPMU) Principal Investigator Masahiro Takada, used ATERUI and ATERUI II, the world’s fastest supercomputers for astrophysical simulation, to develop Dark Emulator. Applying the emulator to data recorded by several of the world’s largest observational surveys allows researchers to explore scenarios for the origin of cosmic structures and how the distribution of dark matter may have changed over time.

“We built an extraordinarily large database using a supercomputer, which took us three years to finish, but now we can recreate it on a laptop in a matter of seconds. I feel like there is great potential in data science. Using this result, I hope we can work our way towards solving the greatest mystery of modern physics: what dark energy is. I also think the method we’ve developed will be useful in other fields, such as the natural sciences or social sciences,” says lead author Nishimichi.

The tool relies on machine learning, a branch of artificial intelligence. The researchers used ATERUI and ATERUI II to create hundreds of virtual Universes, each with different values for several key properties, such as those describing dark matter and dark energy. Dark Emulator learns from this data and predicts the outcome for a new set of properties without running an entirely new simulation each time. When tested against real surveys, it successfully predicted, in a matter of seconds, the weak gravitational lensing effects measured in the Hyper Suprime-Cam survey and the three-dimensional galaxy distribution patterns recorded in the Sloan Digital Sky Survey, to within 2 to 3 per cent. By comparison, running each simulation individually on a supercomputer without the AI would take several days.
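The emulation idea described above (fit once to a set of precomputed runs, then predict outputs for new parameters without simulating again) can be illustrated with a toy sketch. This is not Dark Emulator’s code. It assumes a hypothetical, cheap `toy_simulation` function standing in for a costly supercomputer run; the parameter names `omega_m` and `sigma8` and the 6 × 6 training grid are illustrative choices; and Gaussian process regression is swapped in as one common way of building an emulator.

```python
import math


def toy_simulation(omega_m, sigma8):
    # Hypothetical stand-in for an expensive simulation run: returns a smooth,
    # made-up "clustering amplitude". It is NOT a real cosmological formula.
    return sigma8 ** 2 * math.sqrt(omega_m)


def rbf_kernel(a, b, length_scales):
    # Squared-exponential (Gaussian) kernel with one length scale per parameter.
    sq = sum(((x - y) / l) ** 2 for x, y, l in zip(a, b, length_scales))
    return math.exp(-0.5 * sq)


def cholesky(m):
    # Lower-triangular L with L @ L^T == m (m must be symmetric positive definite).
    n = len(m)
    low = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = sum(low[i][k] * low[j][k] for k in range(j))
            if i == j:
                low[i][j] = math.sqrt(m[i][i] - s)
            else:
                low[i][j] = (m[i][j] - s) / low[j][j]
    return low


def cho_solve(low, b):
    # Solve (L L^T) x = b: forward substitution for L y = b, then back
    # substitution for L^T x = y.
    n = len(low)
    y = [0.0] * n
    for i in range(n):
        y[i] = (b[i] - sum(low[i][k] * y[k] for k in range(i))) / low[i][i]
    x = [0.0] * n
    for i in reversed(range(n)):
        x[i] = (y[i] - sum(low[k][i] * x[k] for k in range(i + 1, n))) / low[i][i]
    return x


class ToyEmulator:
    """Gaussian process regression with a constant mean and fixed hyperparameters."""

    def __init__(self, length_scales, jitter=1e-8):
        self.length_scales = length_scales
        self.jitter = jitter  # small diagonal term for numerical stability

    def fit(self, params, outputs):
        self.params = list(params)
        self.mean = sum(outputs) / len(outputs)
        centered = [y - self.mean for y in outputs]
        k = [[rbf_kernel(p, q, self.length_scales) for q in self.params] for p in self.params]
        for i in range(len(k)):
            k[i][i] += self.jitter
        self.weights = cho_solve(cholesky(k), centered)
        return self

    def predict(self, p):
        # Weighted sum of kernel values against the training runs: no new simulation.
        return self.mean + sum(
            w * rbf_kernel(p, q, self.length_scales) for w, q in zip(self.weights, self.params)
        )


def build_emulator():
    # "Training runs": a 6 x 6 grid of hypothetical (omega_m, sigma8) values.
    grid = [(0.25 + 0.02 * i, 0.70 + 0.04 * j) for i in range(6) for j in range(6)]
    outputs = [toy_simulation(*p) for p in grid]
    return ToyEmulator(length_scales=(0.05, 0.10)).fit(grid, outputs)


if __name__ == "__main__":
    emulator = build_emulator()
    for p in [(0.30, 0.80), (0.28, 0.76), (0.32, 0.84)]:
        truth = toy_simulation(*p)
        guess = emulator.predict(p)
        print(f"params={p} emulated={guess:.5f} direct={truth:.5f} "
              f"error={100 * abs(guess - truth) / truth:.3f}%")
```

Running the script prints, for three parameter sets that are not on the training grid, the emulated value next to the direct result and their percentage difference. Each prediction is only a weighted sum over the 36 training runs, which is why it is cheap; the expensive part, producing the training runs, happens once.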

The researchers hope to apply their tool to data from upcoming surveys in the 2020s, enabling deeper studies of the origin of the Universe.

Details of their study were published in The Astrophysical Journal on 8 October 2019.

Related Links

- Artificial Intelligence tool developed to predict the structure of the Universe (Center for Computational Astrophysics)

- Artificial Intelligence tool developed to predict the structure of the Universe (Yukawa Institute for Theoretical Physics, Kyoto University)

- Artificial Intelligence tool developed to predict the structure of the Universe (Kavli IPMU)